Linear Regression Calculator

Perform linear regression analysis with slope, intercept, R-squared, and prediction intervals for data sets

Enter the X values and the Y values as two comma-separated lists. Both lists must have the same length.
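The input rule above can be sketched in a few lines of Python. This is only an illustration of the validation step, not the calculator's actual code; the function names `parse_values` and `parse_xy` are hypothetical, and the minimum of two points is an assumption based on what a line fit needs.

```python
def parse_values(text):
    """Parse a comma-separated string such as "1, 2, 3.5" into a list of floats."""
    # Skip empty pieces so that a trailing comma does not raise an error.
    return [float(piece) for piece in text.split(",") if piece.strip()]


def parse_xy(x_text, y_text):
    """Parse both inputs and check that they can be paired point by point."""
    xs = parse_values(x_text)
    ys = parse_values(y_text)
    if len(xs) != len(ys):
        raise ValueError(f"X has {len(xs)} values but Y has {len(ys)}")
    if len(xs) < 2:
        # Assumption: at least two points are required to fit a line.
        raise ValueError("at least two points are needed to fit a line")
    return xs, ys


xs, ys = parse_xy("1, 2, 3, 4, 5", "2, 4, 5, 4, 5")
print(xs)  # [1.0, 2.0, 3.0, 4.0, 5.0]
print(ys)  # [2.0, 4.0, 5.0, 4.0, 5.0]
```

A non-numeric entry makes `float` raise a `ValueError`, so malformed input is rejected along with mismatched lengths.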

Regression Equation

Slope (m)

Intercept (b)

R² (Coefficient of Determination)

r (Correlation Coefficient)

Additional Statistics

Number of Points

Mean of X

Mean of Y

Standard Error of Estimate

Standard Error of Slope

Standard Error of Intercept

Prediction

Interpretation

Data Points & Residuals

| # | X | Y (Observed) | Ŷ (Predicted) | Residual (Y - Ŷ) |
|---|---|---|---|---|

About Linear Regression

Linear regression is a statistical method used to model the relationship between a dependent variable (Y) and one independent variable (X) by fitting a straight line to the observed data. The method of ordinary least squares (OLS) finds the line that minimizes the sum of the squared differences between observed and predicted values.

The Regression Equation

ŷ = mx + b

- ŷ — the predicted value of the dependent variable

- m — the slope (rate of change of Y per unit change in X)

- x — the value of the independent variable

- b — the y-intercept (value of Y when X = 0)
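As a small illustration of how the equation is used once m and b are known, the sketch below evaluates ŷ for a given x. The slope and intercept values are made up for the example, and `predict` is a hypothetical helper name.

```python
def predict(x, m, b):
    """Return the predicted value y-hat = m * x + b."""
    return m * x + b


# Illustrative values only: a line with slope 0.5 and intercept 2.0.
m, b = 0.5, 2.0

print(predict(0, m, b))   # 2.0, the intercept (value of Y when X = 0)
print(predict(10, m, b))  # 7.0, since 0.5 * 10 + 2.0 = 7.0
```

Predictions are most trustworthy for x values inside the range of the observed data; extrapolating far beyond it assumes the linear relationship continues to hold.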

Key Statistics Explained

- R² (Coefficient of Determination): Measures the proportion of the variance in Y that is explained by X. Ranges from 0 to 1, where 1 indicates a perfect fit.

- r (Correlation Coefficient): Measures the strength and direction of the linear relationship between X and Y. Ranges from -1 to +1.

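- Standard Error of Estimate: Measures the typical distance between the observed values and the regression line, in the units of Y. It is the square root of the sum of squared residuals divided by n - 2, where n is the number of points.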

- Residuals: The differences between the observed Y values and the predicted Ŷ values. In a good model, residuals should appear randomly scattered around zero.
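The statistics above can be computed directly from the data. The following is a minimal sketch, assuming the usual textbook definitions (in particular, the standard error of estimate divides by n - 2 because two parameters are fitted). The function names are hypothetical, and the slope and intercept are taken as given for a small made-up data set.

```python
import math


def residuals(xs, ys, m, b):
    """Observed minus predicted value for each point, given a fitted line."""
    return [y - (m * x + b) for x, y in zip(xs, ys)]


def standard_error_of_estimate(res):
    """sqrt(SS_res / (n - 2)); n - 2 because m and b were both estimated."""
    n = len(res)
    if n < 3:
        raise ValueError("need at least three points")
    return math.sqrt(sum(e * e for e in res) / (n - 2))


def correlation(xs, ys):
    """Pearson correlation coefficient r between X and Y."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    syy = sum((y - y_mean) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


# Made-up sample data; 0.6 and 2.2 are its least-squares slope and intercept.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]
m, b = 0.6, 2.2

res = residuals(xs, ys, m, b)
r = correlation(xs, ys)
print([round(e, 4) for e in res])
print(round(standard_error_of_estimate(res), 4))
print(round(r, 4), round(r * r, 4))
```

For simple linear regression with an intercept, the square of r equals R², and the residuals of a least-squares fit sum to zero (up to floating-point rounding).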

How the Slope, Intercept, and R² Are Calculated

- Slope (m): m = Σ[(xᵢ - x̄)(yᵢ - ȳ)] / Σ[(xᵢ - x̄)²]

- Intercept (b): b = ȳ - m · x̄

- R²: R² = 1 - (SS_res / SS_tot), where SS_res = Σ(yᵢ - ŷᵢ)² and SS_tot = Σ(yᵢ - ȳ)²
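The three formulas above translate almost line for line into code. The sketch below is one possible implementation, not necessarily how this calculator is built; `fit_line` is a hypothetical name, and returning NaN for R² when every Y value is identical is a design choice made for the example.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit; returns (slope, intercept, r_squared)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of equal length with at least two points")
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n

    # m = sum((x_i - x_mean)(y_i - y_mean)) / sum((x_i - x_mean)^2)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("all X values are identical, so the slope is undefined")
    m = sxy / sxx

    # b = y_mean - m * x_mean
    b = y_mean - m * x_mean

    # R^2 = 1 - SS_res / SS_tot
    ss_res = sum((y - (m * x + b)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - y_mean) ** 2 for y in ys)
    # If every Y is the same, SS_tot is 0 and R^2 is undefined.
    r_squared = 1 - ss_res / ss_tot if ss_tot > 0 else float("nan")
    return m, b, r_squared


# Made-up sample data: x_mean = 3, y_mean = 4, Sxy = 6, Sxx = 10.
m, b, r2 = fit_line([1, 2, 3, 4, 5], [2, 4, 5, 4, 5])
print(f"y = {m:.3f}x + {b:.3f}, R^2 = {r2:.3f}")  # y = 0.600x + 2.200, R^2 = 0.600
```

Working the example by hand gives the same result: m = 6 / 10 = 0.6, b = 4 - 0.6 · 3 = 2.2, SS_res = 2.4, SS_tot = 6, so R² = 1 - 2.4 / 6 = 0.6.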

Interpreting R² Values

Strong Fit (R² > 0.7)

The model explains a large proportion of the variance in Y. The linear relationship is a good description of the data.

Moderate Fit (0.4 ≤ R² ≤ 0.7)

Some of the variance is explained, but other factors or a non-linear model may provide a better fit.

Weak Fit (R² < 0.4)

The linear model does not explain much of the variance. Consider other variables or a different model type.

Perfect Fit (R² = 1)

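All data points fall exactly on the regression line. This is rare with real-world data and may indicate overfitting, for example when there are too few data points: any two points with distinct X values always lie exactly on a line.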
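The thresholds above can be expressed as a small lookup. This is a sketch using the cutoffs listed in this section (0.4 and 0.7); the function name `describe_r_squared` and the tolerance used to detect a perfect fit are assumptions made for the example.

```python
import math


def describe_r_squared(r_squared):
    """Map an R-squared value to the fit categories used in this guide."""
    # Assumption: treat values within floating-point rounding of 1 as perfect.
    if math.isclose(r_squared, 1.0, abs_tol=1e-12):
        return "Perfect Fit"
    if r_squared < 0.0 or r_squared > 1.0:
        raise ValueError("R-squared is expected to lie between 0 and 1")
    if r_squared > 0.7:
        return "Strong Fit"
    if r_squared >= 0.4:
        return "Moderate Fit"
    return "Weak Fit"


for value in (1.0, 0.85, 0.6, 0.2):
    print(value, describe_r_squared(value))
```

These cutoffs are rules of thumb rather than universal standards; what counts as a strong fit varies by field and by how noisy the measurements are.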

Assumptions of Linear Regression

- Linearity: The relationship between X and Y is linear

- Independence: Observations are independent of each other

- Homoscedasticity: The variance of residuals is constant across all levels of X

- Normality: Residuals are approximately normally distributed (important for inference)

Common Applications

Business & Economics

- Forecasting sales from advertising spend

- Predicting revenue based on market size

- Estimating cost functions

- Supply and demand modeling

Science & Engineering

- Calibration curves in chemistry

- Dose-response relationships

- Physical law validation

- Environmental trend analysis

Social Sciences

- Education outcomes vs. study hours

- Health metrics and lifestyle factors

- Population growth trends

- Survey data analysis

References

The formulas and methodology used in this calculator are based on established statistical theory from the following sources:

- NIST/SEMATECH e-Handbook of Statistical Methods — Linear Least Squares Regression

- Yale University Department of Statistics — Linear Regression

- Penn State STAT 501 — Simple Linear Regression

- Khan Academy — Linear Regression Review

- Freedman, D., Pisani, R., & Purves, R. (2007). Statistics (4th ed.). W.W. Norton & Company.

- Montgomery, D. C., Peck, E. A., & Vining, G. G. (2012). Introduction to Linear Regression Analysis (5th ed.). Wiley.

Note: This calculator uses the ordinary least squares (OLS) method for simple linear regression. Results are valid for linear relationships. For non-linear data, consider polynomial or other regression models. Always verify that linear regression assumptions are met before drawing conclusions.

Recommended Calculator

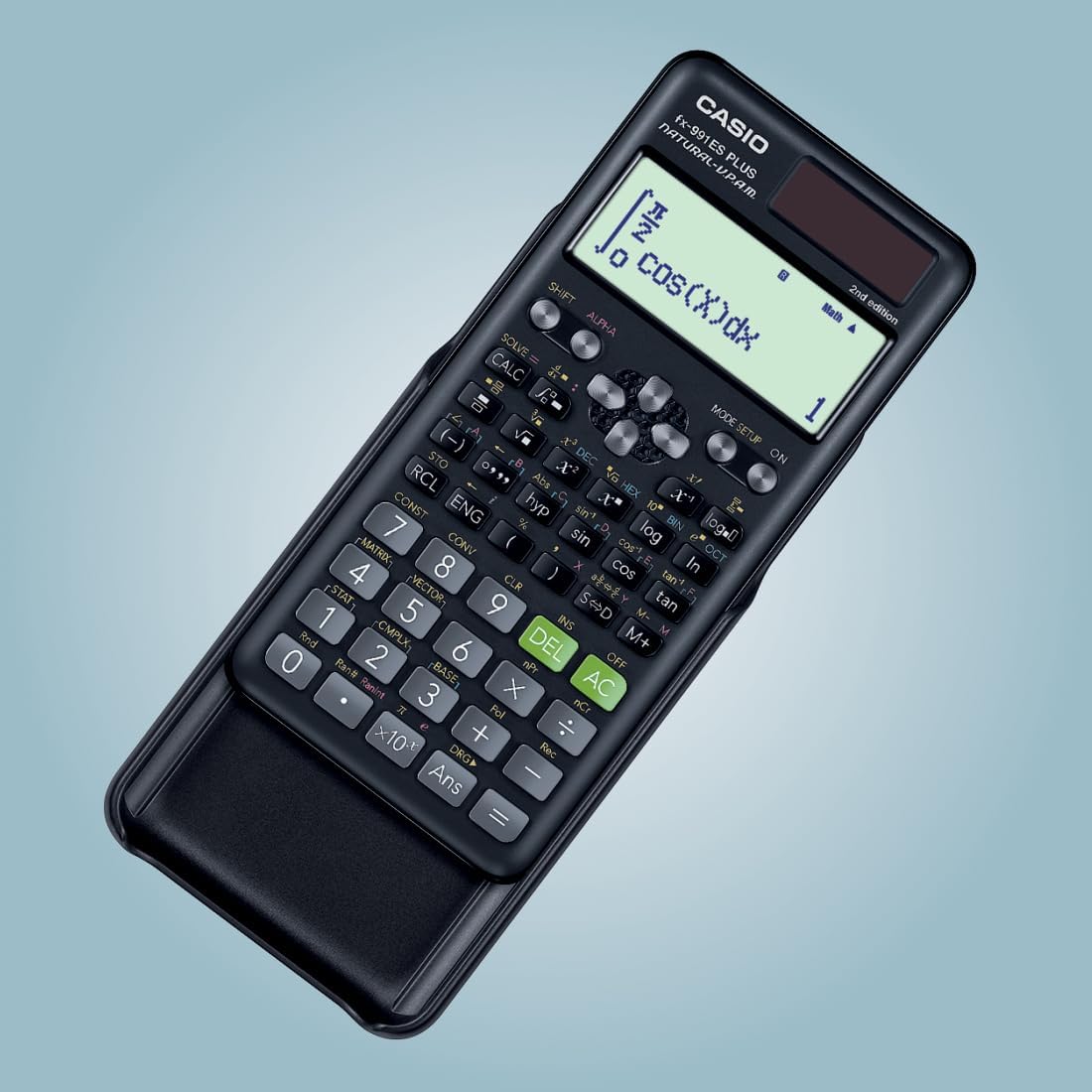

Casio FX-991ES Plus

The professional-grade scientific calculator with 417 functions, natural display, and solar power. Perfect for students and professionals.

View on Amazon